The C-Suite Question That Haunts Every Data Leader

It’s a question that echoes in boardrooms and budget meetings: “We’ve invested millions in data platforms, talent, and tools. What are we actually getting for it?” For years, a confident smile and phrases like “data-driven culture” or “actionable insights” might have sufficed. By 2026, they won’t. As data analytics matures from a niche capability to a core business function, the demand for quantifiable Return on Investment (ROI) is no longer a suggestion; it’s a mandate.

The landscape of data is evolving at an unprecedented pace. As we explore in our comprehensive guide, Data Analytics in 2026: The Ultimate Guide for Business Leaders, the fusion of AI, real-time processing, and democratized access is creating immense potential. But with great potential comes great accountability. Simply generating insights is not enough. The future belongs to organizations that can systematically translate those insights into measurable impact and articulate that value in the language of the business: dollars and cents.

This article moves beyond the theoretical. We’ll dissect the shortcomings of traditional ROI calculations for complex data initiatives and provide a modernized, multi-layered framework designed for the realities of 2026. This is your playbook for turning your data analytics function from a cost center into a proven value-creation engine.

Why Traditional ROI Models Fall Short for Modern Analytics

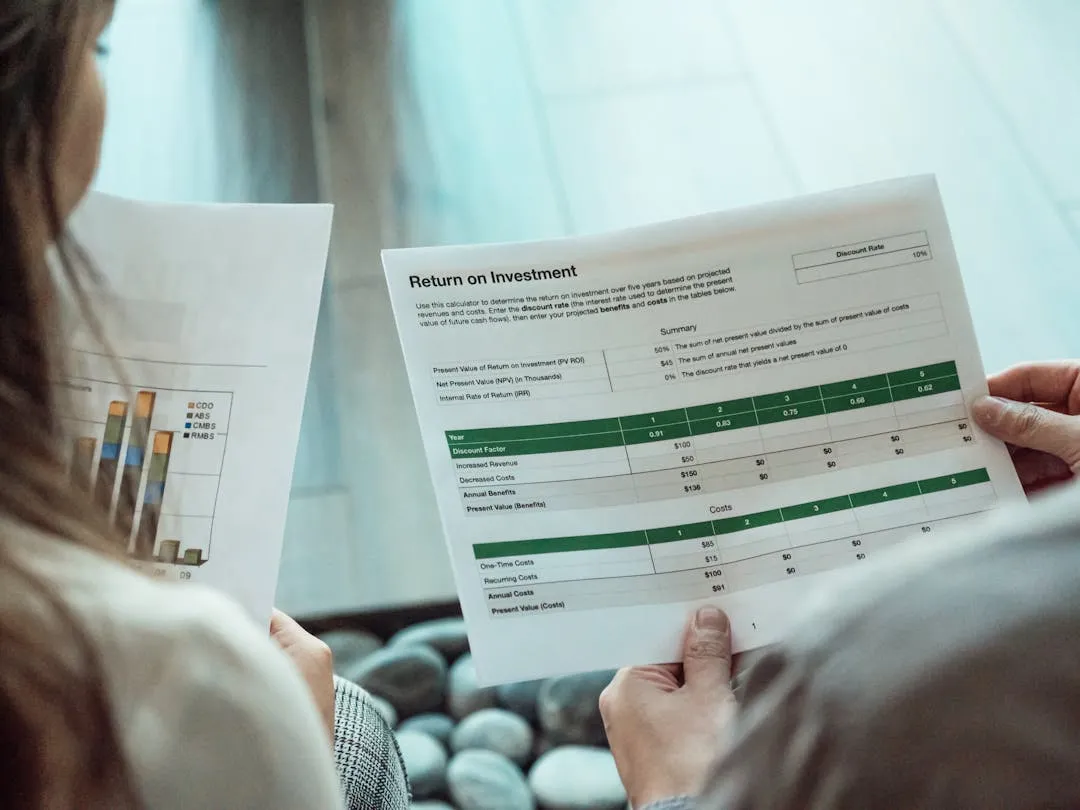

The classic ROI formula—(Gain from Investment - Cost of Investment) / Cost of Investment—is beautifully simple. It works perfectly when buying a new piece of manufacturing equipment with a predictable output. But applying this rigid formula to a dynamic, evolving capability like data analytics is like trying to measure the ocean with a thimble. It misses the bigger picture.
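The classic formula asks for very little, which this minimal Python sketch makes visible. The machine and its figures are hypothetical and serve only to show the arithmetic: one gain, one cost, one ratio.

```python
def roi(gain: float, cost: float) -> float:
    """Classic ROI as a fraction: (gain - cost) / cost, so 0.30 means 30%."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (gain - cost) / cost


# Hypothetical figures: a $500,000 machine that yields $650,000 in added output.
print(f"ROI: {roi(650_000, 500_000):.0%}")  # ROI: 30%
```

Each problem described below is, in effect, a question about what belongs in `gain` and what belongs in `cost`.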

The Fallacy of Direct Attribution

A new marketing analytics dashboard doesn't directly generate revenue. It provides insights that a marketing manager uses to launch a more effective campaign, which in turn generates revenue. The value chain is elongated and often collaborative. Ascribing 100% of the campaign's success to the dashboard ignores the marketer's expertise, the creative content, and market conditions. The value is real, but its attribution is complex.

The Challenge of Intangible and Defensive Benefits

How do you quantify the value of a decision *not* made? A robust risk analytics model might prevent your company from entering a volatile market, saving millions in potential losses. A data governance program might prevent a multimillion-dollar regulatory fine. These “defensive” plays are enormously valuable but rarely appear on a profit and loss statement. They represent value through avoided costs, which are notoriously difficult to track.

The Foundational Investment Problem

Much of the initial investment in data analytics goes into foundational elements: a cloud data warehouse, ETL pipelines, governance frameworks, and hiring a core team. These investments don't produce immediate returns. Instead, they build the capability for all future analytics projects. Attributing the cost of the entire data warehouse to the first dashboard built on it would yield a disastrous ROI. This foundational work enables speed, scale, and trust for years to come, creating a long-tail return that simple formulas ignore.
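A quick calculation shows how much the allocation choice matters. This sketch assumes the platform cost is split evenly across the projects it is expected to support. The dollar figures and the count of 20 projects are hypothetical.

```python
def roi(gain: float, cost: float) -> float:
    """Classic ROI as a fraction: (gain - cost) / cost."""
    return (gain - cost) / cost


def project_roi(project_value: float, project_cost: float,
                platform_cost: float, projects_sharing_platform: int) -> float:
    """ROI for one project after charging it an even share of the platform cost.

    Even allocation is an assumption; usage-based allocation (for example by
    compute hours or query volume) is a common alternative.
    """
    platform_share = platform_cost / projects_sharing_platform
    return roi(project_value, project_cost + platform_share)


# Hypothetical: a $1M platform; the first dashboard costs $50k and delivers $150k.
naive = project_roi(150_000, 50_000, 1_000_000, projects_sharing_platform=1)
shared = project_roi(150_000, 50_000, 1_000_000, projects_sharing_platform=20)
print(f"Whole platform charged to first project: {naive:.0%}")  # -86%
print(f"Platform shared across 20 projects:      {shared:.0%}")  # 50%
```

The dashboard is identical in both cases. Only the accounting changes, and that is the difference between a project that looks like a failure and one that looks like a clear win.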

A Modernized Framework for Measuring Analytics ROI in 2026

To accurately capture the value of data analytics, we need a more sophisticated, tiered approach. At Ansivus, we guide our clients to think about ROI across three distinct categories, moving from the easily quantifiable to the strategically vital. A mature organization in 2026 will measure and report on all three.

Category 1: Direct Financial Impact (The Hard Numbers)

This is the most straightforward category and the best place to start building credibility. These are initiatives where a clear, causal link can be drawn between an analytics output and a financial outcome.

- Revenue Generation: These projects directly increase top-line growth. Examples include:

  - Price Optimization: A B2B SaaS company used historical deal data and customer segmentation to create a dynamic pricing model. Result: An average revenue uplift of 7% per new customer without impacting churn.

  - Churn Prediction: A telecom provider built a machine learning model to identify customers at high risk of churning. Proactive, targeted retention offers were then deployed. Result: A 15% reduction in voluntary churn, preserving an estimated $12M in annual recurring revenue.

  - Next-Best-Action Models: An e-commerce platform implemented a recommendation engine that personalized product suggestions in real time. Result: A 5% increase in average order value and a 10% increase in conversion rates for customers who interacted with the recommendations.

- Cost Reduction: These projects directly improve the bottom line by eliminating waste and inefficiency. Examples include:

  - Supply Chain Optimization: A CPG company used analytics to optimize inventory levels and routing logistics. Result: A 20% reduction in carrying costs and a 12% decrease in shipping expenses, totaling $8M in annual savings.

  - Fraud Detection: A financial services firm deployed an AI-powered anomaly detection system to flag fraudulent transactions in real time. Result: A 60% reduction in fraud-related losses, saving over $25M in its first year.

  - Predictive Maintenance: A heavy manufacturing company attached IoT sensors to critical machinery and used the data to predict failures before they occurred. Result: A 40% decrease in unplanned downtime and a 25% reduction in maintenance costs.

Category 2: Operational Efficiency Gains (The Soft-to-Hard Numbers)

This category quantifies the value of doing things faster, smarter, and with fewer errors. The key is to convert these operational gains into a financial equivalent.

- Time Savings & Automation: This is about freeing up your most valuable resource: your people's time. The formula is simple but powerful:

  (Annual Hours Saved per Employee) x (Number of Employees) x (Fully-Loaded Hourly Employee Cost) = Annual Value

  - Example: A finance department automated its manual month-end reporting process, which took 10 analysts an average of 20 hours each (200 total hours per month). By automating 90% of this work, they saved 180 hours per month. At a loaded cost of $75/hour, that's a direct productivity gain of $13,500 per month, or $162,000 per year. That time can now be reallocated to higher-value analysis.

- Process Improvement & Error Reduction: Data-driven processes are more consistent and accurate. Measuring this involves tracking key process metrics before and after an initiative.

  - Example: An insurance company used a natural language processing (NLP) model to automate the initial review of claims documents. This reduced the manual error rate in data entry from 4% to 0.5%. This not only cut down on rework costs but also accelerated claim processing times, improving customer satisfaction (CSAT) scores by 10 points.
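Both Category 2 calculations are simple enough to encode directly, which makes them easy to standardize across teams. The sketch below reproduces the month-end reporting arithmetic from the example. The function names are illustrative. The claim volume and per-error rework cost in the second call are hypothetical, because the insurance example does not state them.

```python
def annual_time_savings_value(manual_hours_per_employee_per_month: float,
                              automation_share: float,
                              employees: int,
                              loaded_hourly_cost: float) -> float:
    """(Annual hours saved per employee) x (employees) x (loaded hourly cost)."""
    annual_hours_saved_per_employee = (
        manual_hours_per_employee_per_month * automation_share * 12
    )
    return annual_hours_saved_per_employee * employees * loaded_hourly_cost


def rework_savings(items_per_year: int, error_rate_before: float,
                   error_rate_after: float, cost_per_error: float) -> float:
    """Annual rework cost avoided by lowering an error rate."""
    errors_avoided = items_per_year * (error_rate_before - error_rate_after)
    return errors_avoided * cost_per_error


# Month-end reporting example from the text: 10 analysts, 20 hours each,
# 90% automated, $75/hour loaded cost.
print(f"${annual_time_savings_value(20, 0.90, 10, 75):,.0f}")  # $162,000

# Claims example: the 4% and 0.5% error rates come from the text; the
# 50,000 claims per year and $40 rework cost per error are hypothetical.
print(f"${rework_savings(50_000, 0.04, 0.005, 40):,.0f}")  # $70,000
```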

Category 3: Strategic & Intangible Value (The Long-Term Game)

This is the most challenging category to measure but often holds the greatest long-term value. In 2026, articulating this value will separate leading data organizations from the rest. It requires moving from direct measurement to credible estimation and correlation.

- Risk Mitigation: Quantify the value of avoiding negative outcomes. The framework is:

  Value = (Estimated Financial Impact of Event) x (Reduction in Probability of Event)

  - Example: A healthcare provider is subject to HIPAA regulations, under which civil penalties can total $1.5M or more per year for violations of a single provision. By implementing a data access monitoring system, they reduced the annual probability of a significant data breach (based on audit findings) from 5% to 1%. The calculated value of this risk mitigation is $1.5M x 4 percentage points = $60,000 per year, not including reputational damage.

- Enhanced Decision-Making: This is the core promise of analytics. We can measure this through controlled experiments (A/B testing) or by tracking the performance of data-informed decisions versus gut-feel decisions over time.

  - Example: A retail company traditionally relied on merchant intuition for product markdowns. They introduced a data-driven markdown optimization tool for one category of products (the test group) while continuing the old method for another (the control group). After one quarter, the test group achieved a 3% higher gross margin than the control group, a clear financial return on better decision-making.

- Innovation & New Opportunities: Data can be the seed for entirely new products or business models. While direct ROI is impossible initially, you can track the pipeline of ideas generated from data exploration and attribute a portion of the eventual revenue from successful launches back to the originating insight.

- Customer Experience (CX) & Brand Equity: Link analytics initiatives to key CX metrics like Net Promoter Score (NPS) or Customer Lifetime Value (CLV). First, prove that your analytics project improved the metric (e.g., a personalization engine increased NPS by 5 points). Then, use historical data to correlate that metric to financial outcomes (e.g., “For every 1-point increase in NPS, we see a 0.5% decrease in churn”).
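The risk and CX chains above both come down to multiplying a few estimates together, as this minimal sketch shows. It reads the NPS example's “0.5% decrease in churn” as 0.5 percentage points of annual churn, which is an assumption. The $40M recurring revenue base is hypothetical.

```python
def risk_mitigation_value(event_impact: float, probability_before: float,
                          probability_after: float) -> float:
    """Value = (estimated financial impact) x (reduction in annual probability)."""
    return event_impact * (probability_before - probability_after)


def nps_retention_value(nps_lift_points: float,
                        churn_drop_per_nps_point: float,
                        annual_recurring_revenue: float) -> float:
    """Chain an NPS lift to churn, then churn to revenue kept per year.

    Assumes the historical NPS-to-churn relationship is roughly linear and
    still holds. It is a correlation, so treat the output as an estimate.
    """
    churn_reduction = nps_lift_points * churn_drop_per_nps_point
    return churn_reduction * annual_recurring_revenue


# HIPAA example from the text: $1.5M impact, probability cut from 5% to 1%.
print(f"${risk_mitigation_value(1_500_000, 0.05, 0.01):,.0f}")  # $60,000

# NPS example: +5 points and 0.5 percentage points less churn per point come
# from the text; the $40M ARR base is hypothetical.
print(f"${nps_retention_value(5, 0.005, 40_000_000):,.0f}")  # $1,000,000
```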

Building the Business Case: A Practical Roadmap for 2026

A framework is only useful if you can implement it. Here’s a step-by-step approach to building a robust ROI measurement capability within your organization.

Step 1: Start with the Business Objective, Not the Technology

Never start a project with “we need to build a data lake” or “we should use AI.” Start with “we need to reduce customer churn by 10%” or “we need to improve production line efficiency by 5%.” A clearly defined, measurable business goal is the foundation of any ROI calculation. Every analytics project should have a one-sentence charter that links it to a business KPI.

Step 2: Establish Baselines and Control Groups

You cannot prove you improved something if you don’t know its starting point. Before launching any initiative, meticulously document the baseline metrics. How many hours does the manual process take? What is the current customer conversion rate? What is the current machine failure rate? Where possible, use control groups (a segment of the business that doesn't get the new analytics solution) to isolate the impact of your work from other market factors.
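Baselines and control groups combine naturally in a difference-in-differences estimate. You measure the change in the test group, then subtract the change the control group saw over the same period. The sketch below uses hypothetical gross-margin figures. It shows one standard way to use a control group, and it assumes both groups would have moved in parallel without the intervention.

```python
def difference_in_differences(test_before: float, test_after: float,
                              control_before: float, control_after: float) -> float:
    """Estimate impact as the test group's change minus the control group's change.

    The control group's change stands in for what would have happened to the
    test group anyway (seasonality, market shifts). This relies on the two
    groups trending in parallel absent the intervention.
    """
    return (test_after - test_before) - (control_after - control_before)


# Hypothetical gross margins for one quarter before and after launch.
lift = difference_in_differences(test_before=0.30, test_after=0.34,
                                 control_before=0.31, control_after=0.32)
print(f"Estimated lift: {lift * 100:.1f} percentage points")  # 3.0 percentage points
```

A naive before/after comparison of the test group alone would report 4 points. The control group shows that 1 of those points would probably have arrived anyway.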

Step 3: Implement a Tiered Measurement System

Don't force every project into a hard ROI calculation. Use a “portfolio” approach. Projects in Category 1 (Direct Financial Impact) should have a rigorous, CFO-approved ROI model. Projects in Category 2 (Operational Efficiency) can use standardized productivity value calculations. Projects in Category 3 (Strategic Value) should be measured via a balanced scorecard that includes a mix of financial proxies, operational KPIs, and strategic milestones.

Step 4: Attribute Value Across the Data Value Chain

Recognize that value is a team sport. A successful outcome depends on clean data from engineers, an accurate model from data scientists, a clear dashboard from BI developers, and a smart action from a business user. Develop a simple attribution model (e.g., 20% of the value to the platform, 30% to the model, 50% to the business action) to ensure everyone who contributes to the value creation is recognized. This fosters a collaborative culture.
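An attribution model like the 20/30/50 split above can be encoded as a set of weights that must sum to one. This is a minimal sketch. The stage names and the $1M outcome are hypothetical.

```python
import math


def attribute_value(total_value: float, weights: dict[str, float]) -> dict[str, float]:
    """Split realized value across value-chain stages by fixed weights."""
    if not math.isclose(sum(weights.values()), 1.0):
        raise ValueError("attribution weights must sum to 1")
    return {stage: total_value * weight for stage, weight in weights.items()}


# Weights from the example split in the text; the $1M outcome is hypothetical.
shares = attribute_value(1_000_000, {"platform": 0.20, "model": 0.30,
                                     "business_action": 0.50})
for stage, value in shares.items():
    print(f"{stage:<16} ${value:,.0f}")
```

The weight check is what keeps the model honest. Without it, teams can each claim more than their share, and the attributed totals exceed the value actually created.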

Step 5: Communicate and Iterate Relentlessly

ROI measurement is not a one-time report; it's a continuous process. Build an “Analytics Value” dashboard for leadership. Track leading indicators (e.g., user adoption of a new tool) and lagging indicators (the ultimate financial impact). Hold quarterly reviews to assess the realized value of completed projects and forecast the expected value of new ones. This transparency builds trust and transforms the data team from a service provider into a strategic partner.
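One row of an “Analytics Value” dashboard might pair a leading indicator with a lagging one. The sketch below assumes a simple record per project with hypothetical figures. It sorts projects by how much of their forecast value has been realized, which is a natural ordering for a quarterly review.

```python
from dataclasses import dataclass


@dataclass
class ProjectReview:
    name: str
    adoption_rate: float   # leading indicator: share of intended users active
    expected_value: float  # value forecast when the project was approved
    realized_value: float  # lagging indicator: value measured to date

    @property
    def realization_rate(self) -> float:
        return self.realized_value / self.expected_value


# Hypothetical portfolio for one quarterly review.
portfolio = [
    ProjectReview("churn_model", adoption_rate=0.80,
                  expected_value=2_000_000, realized_value=1_500_000),
    ProjectReview("markdown_tool", adoption_rate=0.95,
                  expected_value=500_000, realized_value=550_000),
]

# Lowest realization first, so the review starts with projects at risk.
for p in sorted(portfolio, key=lambda p: p.realization_rate):
    print(f"{p.name:<14} adoption {p.adoption_rate:.0%}  "
          f"realized {p.realization_rate:.0%} of forecast")
```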

The Tech Stack's Role in Accelerating ROI Measurement

By 2026, the technology itself will play a bigger role in proving its own worth. Look for platforms that have ROI measurement baked in:

- Cloud Cost Management Tools: Modern tools allow you to tag and allocate cloud computing and storage costs directly to specific analytics projects or business units, making the “Cost of Investment” part of the equation far more accurate.

- Data Observability Platforms: These tools monitor the health of your data pipelines. By quantifying “data downtime” (periods where data is missing, inaccurate, or delayed) and its business impact, they provide a clear ROI for data quality initiatives.

- MLOps Platforms: These platforms track the performance of machine learning models in production. By monitoring model accuracy and drift, they can directly link a model’s health to the business outcome it drives, alerting you when a degrading model is putting value at risk.
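Once the raw data is available, the three measurements above reduce to small calculations. The sketch below is illustrative only, and all figures in it are hypothetical. The billing rows use a hypothetical tag schema, because real cloud billing exports have provider-specific formats. Downtime follows one commonly cited formulation: incidents times the sum of detection time and resolution time. The value-at-risk estimate assumes a model's value scales linearly with its accuracy, which is a simplification.

```python
from collections import defaultdict


def allocate_costs(line_items: list[dict]) -> dict[str, float]:
    """Sum billed cost per 'project' tag; untagged spend is reported separately."""
    totals: dict[str, float] = defaultdict(float)
    for item in line_items:
        project = item.get("tags", {}).get("project", "untagged")
        totals[project] += item["cost"]
    return dict(totals)


def data_downtime_hours(incidents: list[tuple[float, float]]) -> float:
    """Sum of (hours to detect + hours to resolve) over all data incidents."""
    return sum(detect + resolve for detect, resolve in incidents)


def model_health(baseline_accuracy: float, recent_accuracy: float,
                 annual_value: float, tolerance: float = 0.05) -> tuple[bool, float]:
    """Flag accuracy drops beyond a tolerance and estimate value at risk.

    Assumes business value scales linearly with accuracy (a simplification).
    """
    drop = max(baseline_accuracy - recent_accuracy, 0.0)
    value_at_risk = annual_value * drop / baseline_accuracy
    return drop > tolerance, value_at_risk


# Hypothetical billing rows; the "project" tag key is an assumption.
bill = [
    {"cost": 1200.0, "tags": {"project": "churn_model"}},
    {"cost": 300.0, "tags": {"project": "churn_model"}},
    {"cost": 800.0, "tags": {"project": "pricing"}},
    {"cost": 150.0},
]
print(allocate_costs(bill))
# {'churn_model': 1500.0, 'pricing': 800.0, 'untagged': 150.0}

# Hypothetical incidents before and after a data quality initiative, valued
# at a hypothetical $2,000 of business impact per downtime hour.
before = data_downtime_hours([(4, 2), (10, 6), (1, 1)])  # 24 hours
after = data_downtime_hours([(1, 1), (2, 2)])            # 6 hours
print(f"Downtime cost avoided: ${(before - after) * 2_000:,.0f}")  # $36,000

# Hypothetical model: 90% baseline accuracy, 80% recently, $2M annual value.
alert, at_risk = model_health(0.90, 0.80, 2_000_000)
if alert:
    print(f"Drift alert: about ${at_risk:,.0f} of annual value at risk")
```

Keeping untagged spend as its own bucket, rather than spreading it silently across projects, makes gaps in tagging discipline visible in the cost side of the ROI equation.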

Conclusion: From Justification to Strategic Advantage

Measuring the ROI of data analytics in 2026 will be a hallmark of a truly mature, data-driven organization. It requires a fundamental shift in mindset—from viewing data as a technical asset to managing it as a portfolio of value-generating investments.

Moving beyond a single, simplistic formula to a comprehensive, tiered framework allows you to capture the full spectrum of value your team creates. It enables you to speak the language of the CFO, justify future investments, and, most importantly, focus your limited resources on the initiatives that will drive the most significant impact.

The organizations that master this discipline won't just be justifying their existence; they'll be creating a powerful feedback loop where proven value fuels further investment, accelerating their journey to market leadership. They will have successfully transitioned from merely generating insights to consistently delivering measurable, undeniable impact.